Outages happen, and they happen everywhere. Whether you leverage a public cloud, a hosting provider, or your own data center, infrastructure downtime is inevitable. Equipment breaks or does not function as expected, software bugs slip by, natural disasters occur, and unforeseen situations lead to unexpected consequences. Sometimes services are degraded and sometimes complete data centers go dark.

In the last few months a number of public cloud outages have raised the question of whether the cloud is reliable enough to run business-critical environments. To try to answer that question, we looked at some data points on recent outages.

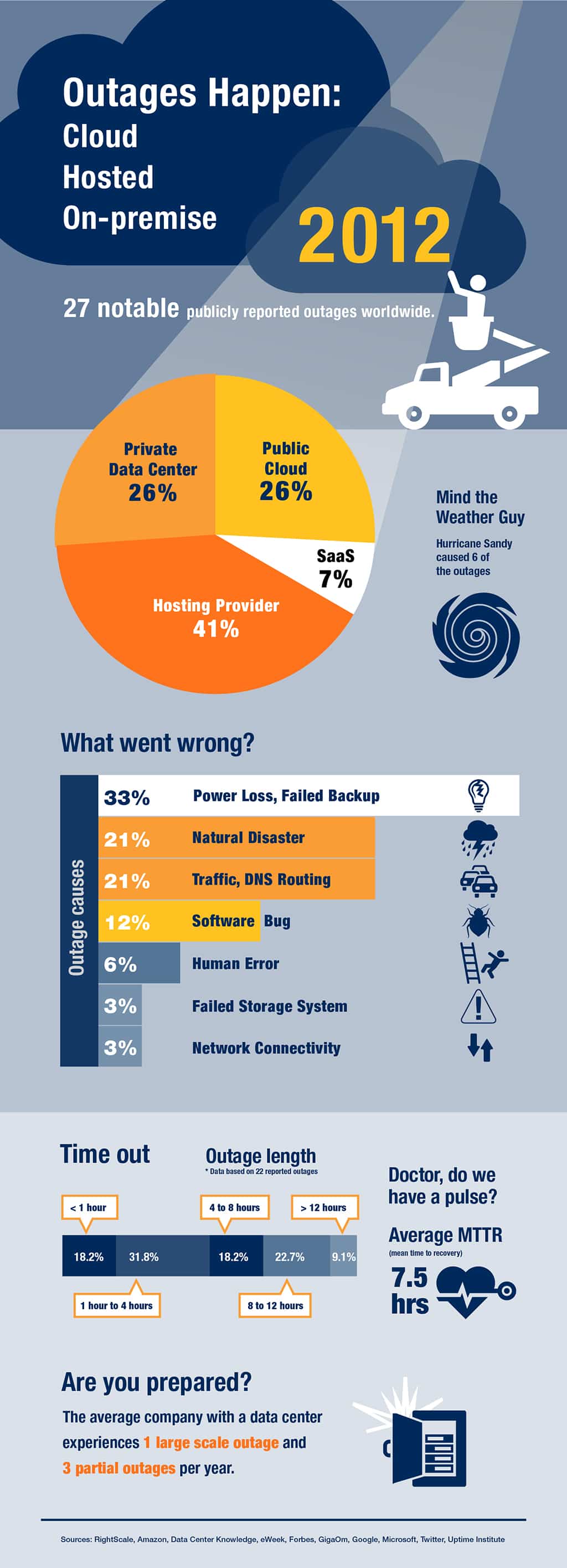

We scoured the Internet for publicly reported data center or cloud outages in 2012. We found 27 notable outages; many more probably went unreported because they affected only a single company. We classified the outages into four categories: public cloud (IaaS), SaaS, third-party hosting provider, and private corporate data center. Of the outages we found, 26 percent were in public clouds and 67 percent were in either private data centers or hosting providers. Power loss, Hurricane Sandy, and other natural disasters were the biggest culprits.

What do we learn from looking at the numbers? First, any data center will eventually fail, whether it is a public cloud, a private corporate data center, or a third-party hosting provider. Any single piece of equipment can fail, and sometimes cascading events can make an entire data center unavailable. According to a report from the Uptime Institute, on average a data center will experience one major outage and three partial outages each year.

Second, when outages do occur, the impact can be significant. In the outages we studied, the average downtime was 7.5 hours. When we looked at just public cloud outages, the average downtime was only marginally higher at 7.7 hours. In either case, the business impact can be high, including significant lost revenue.
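
To put those averages in perspective, downtime can be converted into an annual availability percentage. The sketch below is illustrative only: it assumes a single outage of the average duration per year and a 365-day year, and the name `annual_availability` is ours, not something taken from the outage data.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; assumes a non-leap year


def annual_availability(downtime_hours: float) -> float:
    """Return the fraction of the year a service is up, given total downtime."""
    if not 0 <= downtime_hours <= HOURS_PER_YEAR:
        raise ValueError("downtime must be between 0 and 8,760 hours")
    return 1 - downtime_hours / HOURS_PER_YEAR


# Averages quoted above: 7.5 hours overall, 7.7 hours for public cloud.
for label, hours in [("all outages", 7.5), ("public cloud", 7.7)]:
    print(f"{label}: {annual_availability(hours):.3%}")
```

Under those assumptions, both figures work out to roughly 99.91 percent availability for the year.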

The key takeaway is the need to architect your applications to stay up even when your cloud or data center isn’t.

Companies have long implemented high availability (HA) features and disaster recovery (DR) processes. However, the advent of cloud computing now demands new approaches and offers new options for outage-proofing your applications.

Cloud Extends the Concept of HA

First, let’s take a short look at what it used to be like to set up an HA architecture before the cloud. The basic idea is to have n+1 units available for every type of component so that no single point of failure brings down your environment. In a classic three-tier architecture in a data center, that means dual routers, dual switches, dual load balancers, at least two application servers, and two database machines, each with RAID storage. You would also want redundant power drops into your data center and two network connections.
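
One way to picture the n+1 rule is as a simple inventory check. The following is a minimal sketch, not a real audit tool: the inventory, the component names, and the `single_points_of_failure` helper are all hypothetical, and the minimum of two units corresponds to the simplest case where one unit can carry the load.

```python
from typing import Dict, List

# Hypothetical inventory for the three-tier example: component type -> units deployed.
inventory: Dict[str, int] = {
    "router": 2,
    "switch": 2,
    "load_balancer": 2,
    "app_server": 2,
    "database": 1,  # deliberately under-provisioned to show a finding
    "power_feed": 2,
    "network_uplink": 2,
}


def single_points_of_failure(units: Dict[str, int], minimum: int = 2) -> List[str]:
    """Return component types that have fewer than `minimum` units."""
    return sorted(name for name, count in units.items() if count < minimum)


print(single_points_of_failure(inventory))  # ['database']
```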

Now let’s compare this to what you can do with cloud computing. Most public clouds let you launch instances in multiple regions and in multiple availability zones or groups (AZs). Each region is in a discrete geographic location, such as the east coast of the US or Europe. Individual AZs live within each region, and each AZ is meant to be completely isolated from all the others (a shared-nothing architecture), so that a failure of one should not impact the others, while remaining only single-digit milliseconds of network latency away. This structure means that you can design HA architectures that are resilient to data center outages without having to deal with the significant cost and complexity of managing every aspect of the physical infrastructure on your own.

A modest yet fairly redundant HA environment may run in a single AWS region but span two AZs. Think about what a comparable environment would take to build with your own infrastructure. You would need two complete and separate data centers with great network performance between them, as well as a VPN.
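
The benefit of spanning two AZs can be sketched with basic probability. This example assumes that zone failures are independent (the design goal of AZ isolation, though not a guarantee) and that the application can keep serving from any one surviving zone. The 99.9 percent figure is an illustrative input, not a measured or published value, and `combined_availability` is a hypothetical helper.

```python
from math import prod
from typing import Iterable


def combined_availability(zone_availabilities: Iterable[float]) -> float:
    """Availability of a service that stays up while at least one zone is up.

    Assumes zone failures are statistically independent, which is the design
    goal of AZ isolation but is not guaranteed in practice.
    """
    values = list(zone_availabilities)
    if not values or any(not 0 <= a <= 1 for a in values):
        raise ValueError("provide at least one availability between 0 and 1")
    # The service is down only when every zone is down at the same time.
    return 1 - prod(1 - a for a in values)


# Illustrative figure only: each zone assumed to be 99.9% available.
print(f"one zone:  {combined_availability([0.999]):.6f}")
print(f"two zones: {combined_availability([0.999, 0.999]):.6f}")
```

Correlated failures, such as a region-wide event, would make the real-world gain smaller than this calculation suggests.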

Thanks to cloud computing, environments like this are not difficult to build anymore. To provision a server in a different availability zone, you simply choose an item from a drop-down menu. Contrast that with what it is like to have to order equipment, ship it to a distant data center, and take a long drive or flight to install it. Many companies simply take shortcuts in their HA/DR architecture because of the cost and effort involved. Leveraging the cloud can reduce those disaster recovery costs and increase the uptime you can expect from your environment.
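
The same choice can be made programmatically rather than through a menu. Below is a minimal sketch of round-robin placement across zones; the server names, zone names, and the `spread_across_zones` helper are hypothetical, and no real cloud API is called.

```python
from itertools import cycle
from typing import Dict, List


def spread_across_zones(servers: List[str], zones: List[str]) -> Dict[str, str]:
    """Assign each server to a zone in round-robin order."""
    if not zones:
        raise ValueError("at least one zone is required")
    return dict(zip(servers, cycle(zones)))


# Hypothetical server and zone names, for illustration only.
placement = spread_across_zones(
    ["app-1", "app-2", "db-primary", "db-replica"],
    ["zone-a", "zone-b"],
)
for server, zone in placement.items():
    print(f"{server} -> {zone}")
```

The server list here is ordered so that the two members of each tier land in different zones; a real deployment would want to enforce that per tier rather than rely on list order.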

Most of us are creatures of habit, and we tend to go back to what we already know. Maybe this is why you keep hearing about companies that have been impacted by an AWS outage because they have relied on a single AZ. I have talked with dozens of companies in the last few years and I can confirm this is a common error. I think that is because many companies don’t realize how cloud computing can reduce the previously prohibitive cost of creating truly HA environments with geographic redundancy.

Mind you, there are also some very public success stories from companies that do not go dark when AWS has a problem and an AZ goes out. If you are building an environment for the cloud, it’s time to re-examine old assumptions about the cost and effort of HA/DR and take advantage of the capabilities the cloud affords you. One of our senior cloud architects explains it like this: “You can have great tools, but if you are not a good carpenter, a stiff wind will knock down your house.” In the cloud, you cannot depend on the infrastructure layer alone, because the infrastructure is bound to fail eventually.

The real issue is not whether data centers will go out or how long they will stay dark, but rather how prepared you are to deal with that eventuality. Cloud computing gives you a radically simpler and lower-cost option for HA/DR that traditional data centers can’t match. Learn how RightScale can help you manage your data and applications in the cloud.